The Bias You're Sure You Don't Have

We all think we're less biased than the average person. The math says we can't all be right — and that gap shapes everything we read, hear, and believe.

Ask any group of people whether they're more or less biased than the average person. Almost everyone says: less. Significantly less.

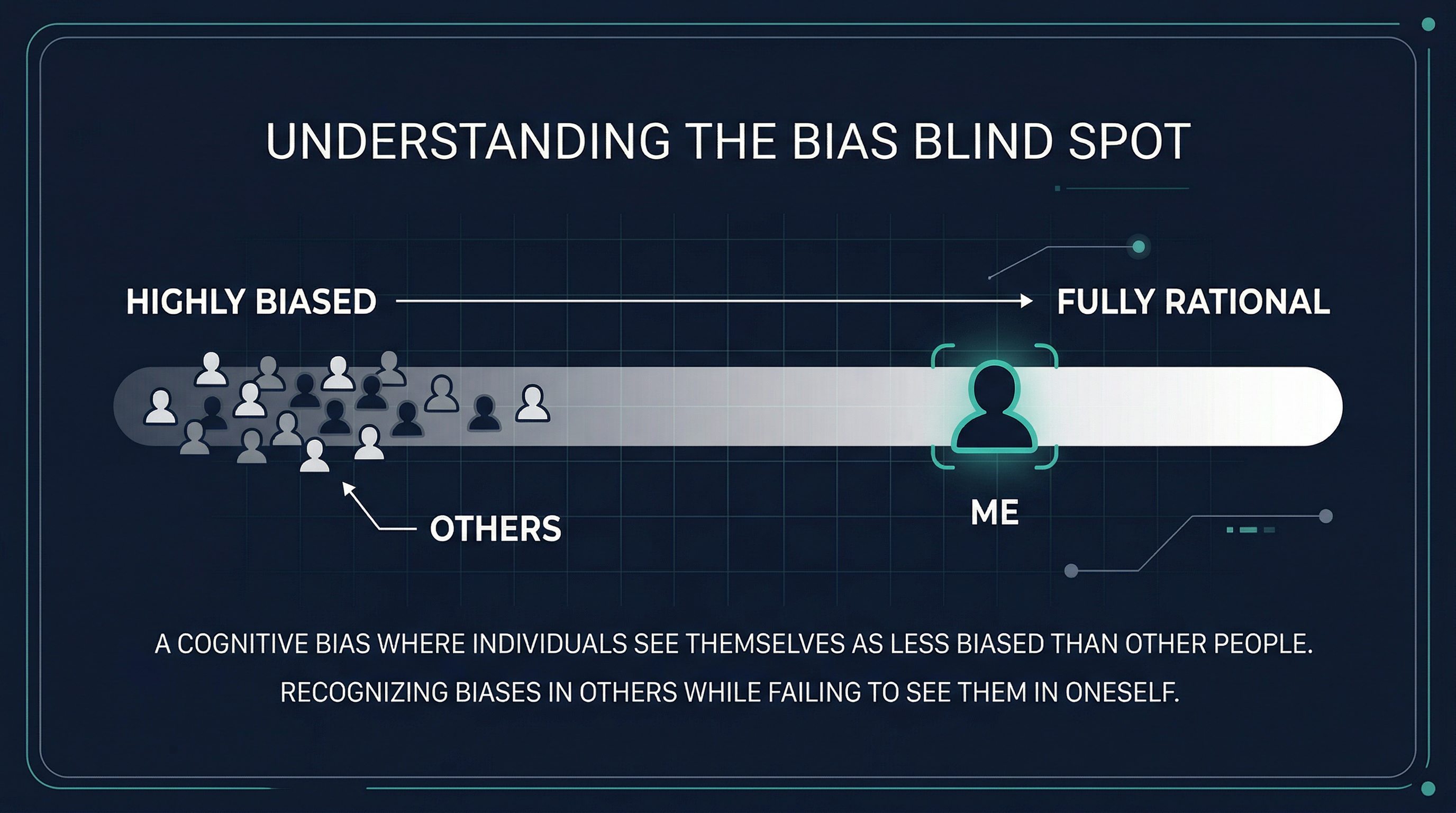

The problem? We can't all be right. This quirk has a name — the bias blind spot — and it's one of the most important things to understand if you care about thinking well.

Confirmation bias, our tendency to seek out information that confirms what we already believe and to quietly dismiss what challenges it, is one of the best-documented forces in human cognition. A 2024 study of 1,479 people found that simply becoming aware of confirmation bias made people measurably more resistant to misinformation. But there's a catch: the people who most resisted the intervention were those who already believed they were objective.

The first bias, in other words, is believing you don't have one.

What a narrow information diet actually costs you

When we only encounter information that agrees with us, our thinking doesn't just get more confident — it gets more brittle. We lose the ability to steelman an opposing view, notice where our own reasoning is weak, or update when new evidence arrives.

Political polarisation is the most visible symptom, but the same mechanism affects how we think about science, health, money, and relationships. A 2025 study found that AI tools can make this worse: when you interact with a chatbot in ways shaped by your existing beliefs, it tends to adapt to you — reinforcing your worldview rather than challenging it.

If we want AI to make us smarter, we need to actively resist this pull.

Three things you can do about it

1. Seek out the strongest version of the opposing view. Not to agree with it — but to understand it well enough to argue it yourself. If you can't, you probably don't understand the issue as well as you think.

2. Use tools designed to surface your blind spots. Ground News is a good example: it shows you how outlets across the political spectrum cover the same story, and flags stories being covered almost exclusively by one side. It's a useful mirror for noticing what you're not seeing.

3. Notice the feeling of instant dismissal. When an idea makes you immediately roll your eyes, that's worth pausing on. It might just be a bad idea — or it might be your confirmation bias talking.

Being smart isn't just about knowing more. It's about understanding how you know what you know — and staying genuinely curious about where your blind spots might be.

Want to think out loud about this? We'd love to hear from you.